The urllib.request module defines the following functions:

`urllib.request.urlopen(url, data=None[, timeout], *, cafile=None, capath=None, cadefault=False, context=None)`

Open the URL url, which can be either a string or a Request object.

data must be an object specifying additional data to be sent to the server, or None if no such data is needed.

The urllib.request module uses HTTP/1.1 and includes a Connection: close header in its HTTP requests.

The optional timeout parameter specifies a timeout in seconds for blocking operations like the connection attempt (if not specified, the global default timeout setting will be used). This only works for HTTP, HTTPS and FTP connections.

If context is specified, it must be an ssl.SSLContext instance describing the various SSL options.

The optional cafile and capath parameters specify a set of trusted CA certificates for HTTPS requests. cafile should point to a single file containing a bundle of CA certificates, whereas capath should point to a directory of hashed certificate files. More information can be found in ssl.SSLContext.load_verify_locations(). The cafile, capath and cadefault parameters were deprecated in Python 3.6 in favor of context and were removed in Python 3.13.

This function always returns an object which can work as a context manager and has the properties url, headers, and status. See urllib.response.addinfourl for more detail on these properties.

For HTTP and HTTPS URLs, this function returns a slightly modified http.client.HTTPResponse object. Its msg attribute contains the same information as the reason attribute (the reason phrase returned by the server) instead of the response headers that the HTTPResponse documentation specifies.

For FTP, file, and data URLs, and for requests explicitly handled by the legacy URLopener and FancyURLopener classes, this function returns a urllib.response.addinfourl object.
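The following is a minimal sketch of the urlopen behaviour described above. It assumes Python 3.9 or later and uses only the standard library. The returned object is used as a context manager, timeout is passed explicitly, and the status and headers properties are read from the response. The helper name fetch_text and the fallback to UTF-8 when no charset is declared are illustrative assumptions, not part of urllib.

```python
from __future__ import annotations

from urllib.request import urlopen


def fetch_text(url: str, timeout: float = 10.0) -> tuple[int | None, str]:
    """Open `url` and return (status, decoded body).

    The object returned by urlopen() is a context manager, so the
    underlying connection or file handle is closed when the block exits.
    """
    with urlopen(url, timeout=timeout) as response:
        # Use the charset declared in the Content-Type header, falling
        # back to UTF-8 (an assumption made here) when none is given.
        charset = response.headers.get_content_charset() or "utf-8"
        body = response.read().decode(charset)
        # `status` holds the HTTP status code for HTTP(S) URLs;
        # for a data: URL it is None.
        return response.status, body


if __name__ == "__main__":
    # A data: URL keeps the demo offline; an http(s):// URL works too.
    print(fetch_text("data:text/plain;charset=utf-8,hello"))
```

Running the script prints `(None, 'hello')`. For an http(s):// URL, the first element is the numeric HTTP status code instead.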

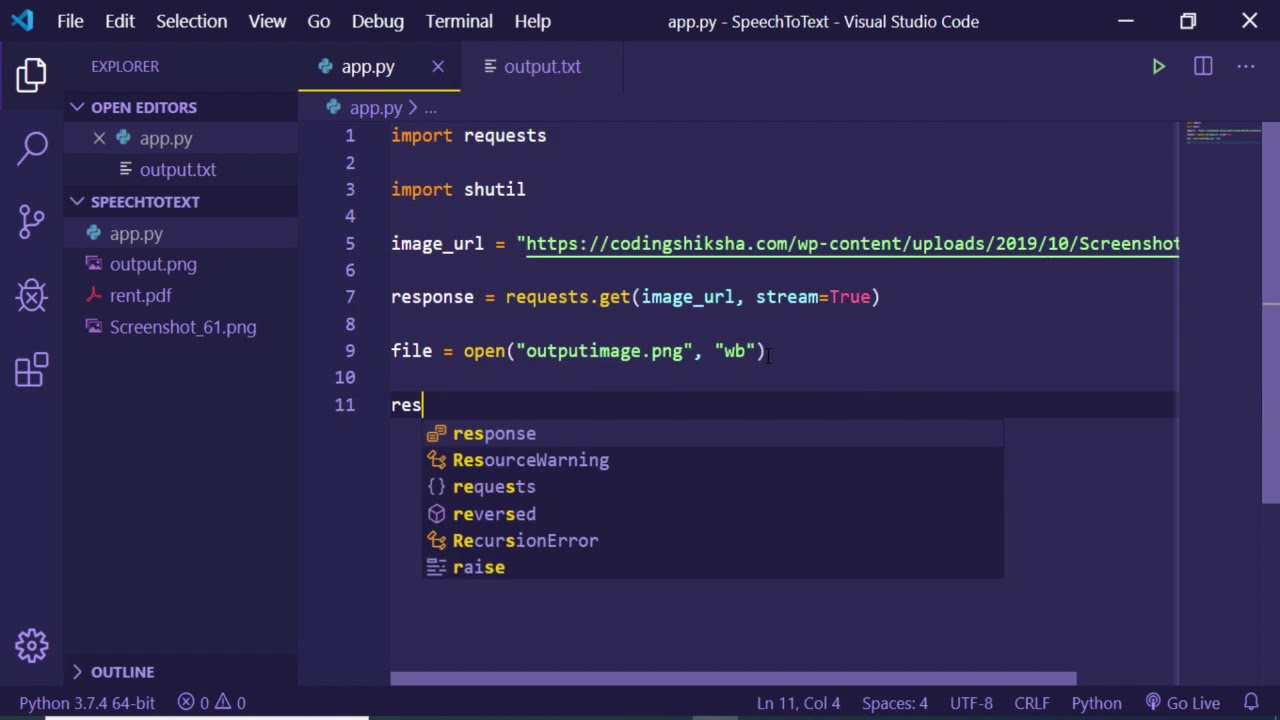

This article outlines three ways to download a file using Python, with a short discussion of each.

Python's urllib library offers a range of functions designed to handle common URL-related tasks. This includes parsing, requesting, and (you guessed it) downloading files. Other libraries, most notably the Python requests library, can provide a clearer API for those more concerned with higher-level operations.

Let's consider a basic example of downloading a site's robots.txt file:

```python
from urllib import request

remote_url = "https://www.example.com/robots.txt"  # placeholder URL
local_file = "robots.txt"  # local path to save the download to
request.urlretrieve(remote_url, local_file)
```

Note: urlretrieve belongs to what the Python documentation calls urllib's "legacy interface," which was ported from the Python 2 urllib module. In the words of the documentation, it "might become deprecated at some point in the future." In my opinion, there's a big divide between "might" become deprecated and "will" become deprecated. In other words, this is probably a safe approach for the foreseeable future.

The Python requests module is a super friendly library billed as "HTTP for humans." Offering very simplified APIs, requests lives up to its motto even for high-throughput HTTP-related demands. However, it doesn't feature a one-liner for downloading files. Instead, one must manually save the streamed file data to disk.

There are some important aspects of this approach to keep in mind. Most notably, the data is transferred, and should be written, in binary format. When a web browser loads a page (or file), it decodes it using the encoding specified by the host. Common encodings include UTF-8 and Latin-1. That encoding is a directive aimed at web browsers that receive and display data, and it isn't immediately applicable to downloading files.

Note: downloaded text files may need to be decoded with the correct encoding in order to display properly. That's beyond the scope of this tutorial.

The wget Python library offers a method similar to urllib's. It attracts a lot of attention because its name is identical to that of the Linux wget command.

Note: The wget.download function uses a combination of urllib, tempfile, and shutil to retrieve the data, save it to a temporary file, and then move (and rename) that file to the specified location.

Final Thoughts

Downloading files with Python is super simple and can be accomplished using the standard urllib functions. For common HTTP-related tasks, though, I've found the requests library to offer the easiest and most versatile APIs. The Python documentation itself notes that the Requests package is recommended for a higher-level HTTP client interface. One notable exception is URL parsing, where urllib's URL parsing features remain useful.
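The requests discussion describes a streamed, binary-mode save, and the wget note describes a temporary-file-then-move pattern. The two ideas can be combined and sketched with the standard library alone. This is an illustrative sketch under stated assumptions, not the requests or wget API. Here urlopen stands in for requests. The function name download, the .part suffix, the default chunk size and the use of os.replace for the final move are all choices made for this sketch.

```python
import os
import shutil
import tempfile
from urllib.request import urlopen


def download(url: str, destination: str, chunk_size: int = 64 * 1024,
             timeout: float = 30.0) -> str:
    """Stream `url` to `destination` in binary chunks and return the path.

    The data is written to a temporary file in the destination directory
    and only moved into place once the transfer completes, so a failed
    download never leaves a truncated file at `destination`.
    """
    dest_dir = os.path.dirname(os.path.abspath(destination))
    # Keep the temporary file on the same filesystem as the destination
    # so the final os.replace() is a rename rather than a copy.
    fd, tmp_path = tempfile.mkstemp(suffix=".part", dir=dest_dir)
    try:
        with os.fdopen(fd, "wb") as out, urlopen(url, timeout=timeout) as response:
            # Binary mode: bytes are copied as-is with no text decoding,
            # one chunk at a time instead of loading the whole body.
            shutil.copyfileobj(response, out, chunk_size)
        os.replace(tmp_path, destination)
    except BaseException:
        # Remove the partial file, then let the original error propagate.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return destination
```

For example, `download("https://www.example.com/robots.txt", "robots.txt")` (a placeholder URL) does the same job as the one-line urlretrieve call. The difference is that the file appears at its final path only if the whole transfer succeeds. One side effect is worth knowing: on POSIX systems, tempfile.mkstemp() creates the file readable and writable only by the current user, and os.replace() keeps those permissions on the downloaded file.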